The most interesting bugs do not show up during regression testing but when real people use the software. Things become nasty if the problem happens only after several hours of usage, when the whole application sometimes freezes for minutes. If you have a bigger machine with e.g. 40 cores, most of them busy, and dozens of processes running, it can become challenging to track down what went wrong. Attaching a debugger does not help since it is not clear which process is causing the problem, and even if you knew, you would cause issues for the users who are already working on that machine. Using plain ETW tracing is not so easy either because the amount of generated data can exceed 10 GB/min. The default Windows Performance Recorder profiles are not an option because they simply generate too much data. If you have ever tried to open a 6+ GB ETL file in WPA, you know it can take 30 minutes until it opens. If you drag and drop some columns around, you have to wait some minutes until the UI settles again.

Getting ETW Data The Right Way

Taking data with xperf offers more fine-grained control at the command line, but it also has its challenges. If you have e.g. a 2 GB user session and a 2 GB kernel session which both trace into a circular in-memory buffer, you can dump the contents with

xperf -flush "NT Kernel Logger" -f kernel.etl

xperf -flush "UserSession" -f user.etl

xperf -merge user.etl kernel.etl merged.etl

The result looks strange:

For some reason flushing the kernel session takes a very long time (up to 15 minutes) on a busy server. In effect you end up with e.g. the first 20 s of kernel data, a 6 minute gap, and then the user mode session data, which was recorded long after the problem occurred. To work around that you can either flush the user session first or use a single xperf command to flush both sessions at the same time:

xperf -flush "UserSession" -f user.etl -flush "NT Kernel Logger" -f kernel.etl
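If you script the capture, the combined flush can be assembled from a list of session/file pairs so that every session is dumped by one xperf invocation. The following is only a minimal Python sketch: the helper name `build_flush_command` is made up for illustration, and it uses nothing beyond the `-flush` and `-f` switches shown above.

```python
def build_flush_command(sessions):
    """Build one xperf call that flushes every (session name, output file) pair."""
    if not sessions:
        raise ValueError("at least one session is required")
    cmd = ["xperf"]
    for name, etl_file in sessions:
        # Each session contributes its own -flush/-f pair to the same command.
        cmd += ["-flush", name, "-f", etl_file]
    return cmd


# User session first, mirroring the command above.
FLUSH_ALL = build_flush_command([
    ("UserSession", "user.etl"),
    ("NT Kernel Logger", "kernel.etl"),
])
```

The resulting list can be handed to `subprocess.run(FLUSH_ALL, check=True)` from an elevated prompt while both sessions are running.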

If you want to profile a machine where you do not know where the issues are coming from, you should start with some “long” term tracing. If you do not record context switch events and rely mainly on the CPU sampling (profiling) events, you can already get quite far. The default sampling rate is 1 kHz (a thousand samples per second), which is good for many cases but too much for long sessions. When you expect a lot of CPU activity you can take a sample only every 10 ms, which will get you much farther. To limit the amount of traced data you have to be very careful about which events you collect call stacks for. Usually the stack traces are about 10 times larger than the actual event data. If you want to create a dedicated trace configuration to spot a very specific issue, you need to know how much each ETW provider costs you; its contribution to the size of the ETL file is especially relevant. WPA has a very nice overview under System Configuration – Trace Statistics which shows you exactly that.

You can also paste the data into Excel to get a more detailed understanding of which provider is costing you how much.

In essence, you should enable stack walking only for the events you really care about if you want to keep the trace file small. Stack walks cost you a lot of storage (up to 10 times more than the actual event payload). A great thing is that tracelog lets you configure ETW to omit stack walking for specific events of an ETW provider. This works on Windows 8.1 and later and is sometimes necessary.
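To get a feeling for these numbers, here is a rough back-of-the-envelope sketch in Python. It assumes that every core produces one profiling sample per interval and that stacks are ten times the size of the payload they belong to; the 100 MB payload and the 5% share are made-up illustration values, not measurements.

```python
def samples_per_hour(cores, interval_ms):
    """Profiling samples per hour if every core yields one sample per interval."""
    return cores * 3_600_000 // interval_ms


def size_with_stacks_mb(payload_mb, stack_percent, stack_factor=10):
    """Payload plus stack data when stack_percent of the payload carries stacks."""
    return payload_mb + payload_mb * stack_factor * stack_percent // 100


print(samples_per_hour(40, 1))        # 1 kHz default on a 40 core machine
print(samples_per_hour(40, 10))       # one sample every 10 ms
print(size_with_stacks_mb(100, 100))  # stacks for every event
print(size_with_stacks_mb(100, 5))    # stacks for 5% of the event data
```

Under these assumptions the 10 ms interval cuts the sample count by a factor of ten, and restricting stacks to a small share of the events keeps the file close to the pure payload size.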

To enable stack walking for e.g. only the CLR Exception event, you can use the following tracelog call for a user mode session named DotNetSession. The value e13c0d23-ccbc-4e12-931b-d9cc2eee27e4 is the GUID of the provider .NET Common Language Runtime. It is needed because tracelog does not accept pretty ETW provider names; only GUIDs prefixed with a # make it happy.

tracelog -enableex DotNetSession -guid #e13c0d23-ccbc-4e12-931b-d9cc2eee27e4 -flags 0x8094 -stackwalkFilter -in 1 80

If you want to disable the highest volume events of Microsoft-Windows-Dwm-Core, you can configure a list of events which should not be traced at all:

tracelog -enableex DWMSession -guid #9e9bba3c-2e38-40cb-99f4-9e8281425164 -flags 0x1 -level 4 -EventIdFilter -out 23 2 9 6 244 8 204 7 14 13 110 19 137 21 111 57 58 66 67 68 112 113 192 193

That line keeps the DWM events which are relevant to the WPA DWM Frame Details graph. Tracelog is part of the Windows SDK, but originally it was only part of the Driver SDK. That explains at least partially why it is the only tool that lets you declare ETW event and stackwalk filters. When you filter something in or out, the first number you pass must be the count of event ids that follow it.

tracelog … -stackwalkFilter -in 1 80

means: enable stack walks for one event, the one with event id 80.

tracelog … -EventIdFilter -out 23 2 9 …

will filter out 23 of the enabled events; their event ids follow directly after the count. You can also execute such tracelog statements on operating systems older than Windows 8.1. In that case the event id filter clauses are simply ignored. In my opinion xperf should support this out of the box, so that I do not have to use another tool to fully configure lightweight ETW trace sessions.
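Because the leading count is easy to get wrong when you edit a long id list by hand, it can help to generate the filter clause. The following Python sketch does only that; `filter_args` is a hypothetical helper name, and the switches and event ids are taken verbatim from the tracelog calls above.

```python
def filter_args(switch, mode, event_ids):
    """Return a tracelog filter clause with the leading id count filled in."""
    if mode not in ("-in", "-out"):
        raise ValueError("mode must be -in or -out")
    ids = [int(i) for i in event_ids]
    if not ids:
        raise ValueError("at least one event id is required")
    # tracelog expects the number of ids first, then the ids themselves.
    return [switch, mode, str(len(ids))] + [str(i) for i in ids]


# Event ids from the DWM example above.
DWM_FILTERED_IDS = [2, 9, 6, 244, 8, 204, 7, 14, 13, 110, 19, 137,
                    21, 111, 57, 58, 66, 67, 68, 112, 113, 192, 193]

print(" ".join(filter_args("-stackwalkFilter", "-in", [80])))
print(" ".join(filter_args("-EventIdFilter", "-out", DWM_FILTERED_IDS)))
```

The printed fragments can be appended to the rest of a tracelog -enableex command line.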

Analyzing ETW Data

After fiddling with the ETW providers and setting up filters for the most common providers, I was able to get a snapshot at the time when the server was slow. The UI had not been responding for minutes, and I found this interesting call stack consuming one core for nearly three minutes!

When I drilled deeper I saw that Gen1 collections were consuming enormous amounts of CPU in the method WKS::allocate_in_older_generation.

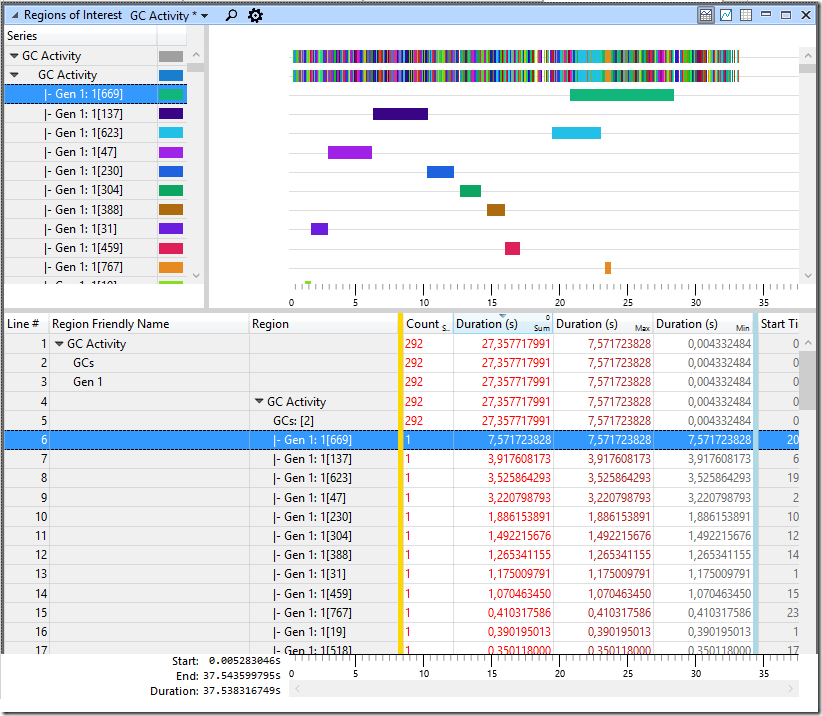

When you have enabled the .NET ETW provider with the keyword 0x1, which tracks GC events, and you use my GC Activity Region file, you can easily see that some Gen1 collections are taking up to 7 s. If such a condition occurs in several different processes at the same time, it is no wonder that the server looks largely unresponsive although all other metrics like CPU, memory, disk IO and the network are ok.
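If you export the GC pause times from such a trace, spotting the outliers across many processes is a simple filter. The Python sketch below assumes the data has already been brought into rows of process name, generation and pause time in milliseconds; that row layout, the 1000 ms threshold and the sample rows are invented for illustration and are not data from the server.

```python
from collections import namedtuple

# Hypothetical row layout for exported GC pause data.
GcPause = namedtuple("GcPause", "process generation pause_ms")


def long_gen1_pauses(pauses, threshold_ms=1000):
    """Return the Gen1 collections pausing longer than threshold_ms, longest first."""
    hits = [p for p in pauses if p.generation == 1 and p.pause_ms > threshold_ms]
    return sorted(hits, key=lambda p: p.pause_ms, reverse=True)


# Made-up sample rows, not measurements.
sample = [
    GcPause("ProcessA", 1, 4),
    GcPause("ProcessA", 1, 7000),
    GcPause("ProcessB", 2, 1500),
    GcPause("ProcessB", 1, 2300),
]

for pause in long_gen1_pauses(sample):
    print(pause.process, pause.pause_ms)
```

Sorting by pause time puts the worst offenders first, which makes it easier to see whether several processes are hit at the same time.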

When I find an interesting call stack it is time to look at what the internet can tell me about allocate_in_older_generation. This directly points me to Stack Overflow, where someone else already had this issue: http://stackoverflow.com/questions/32048094/net-4-6-and-gc-freeze which brings me to https://blogs.msdn.microsoft.com/maoni/2015/08/12/gen2-free-list-changes-in-clr-4-6-gc/ that mentions a .NET 4.6 GC bug. There the .NET GC developer nicely explains what is going on:

… The symptom for this bug is that you are seeing GC taking a lot longer than 4.5 – if you collect ETW events this will show that gen1 GCs are taking a lot longer. …

The server was running .NET 4.6.96.0, which is a .NET 4.6 version. Together with the data above, this explains why, with an unlucky allocation pattern in processes that had already allocated > 2 GB of memory, the Gen1 GC pause times would sometimes jump from several ms up to seconds. Getting 1000 times slower at random times certainly qualifies as a lot longer. It would even be justified to use more drastic words for how much longer this sometimes takes. But ok, the bug is known and fixed. If you install .NET 4.6.1 or the upcoming .NET 4.6.2 version you will never see this issue again. It was quite tough to get the right data at the right time, but I am getting better at these things by looking at the complete system at once. I am not sure if you could really have found this issue with normal tools, since it manifests in random processes at random times. If you concentrate on only one part of the system at a time, you will most likely fail to identify such systemic issues. If you had updated your server after some months as part of regular maintenance work, the issue would have vanished, leaving you with no real explanation why the long-standing issue suddenly disappeared. Or you would at least have identified a .NET Framework update in retrospect as the fix you had been searching for so long.
